Most people associate the word “nuclear” with two things–weapons and meltdowns, neither of which is good news. Despite the extensive progress atomic energy researchers have made in designing safer reactors, nuclear accidents still occur–most recently in 2011 at Fukushima, after a magnitude 9.0 earthquake off the coast of Japan and the tsunami it triggered. The cleanup process and the 40-year plan to decommission the plant have involved giant underground walls of ice and the nonstop construction of enormous steel storage tanks full of radioactive water that no one really knows what to do with. So why aren’t our power plants getting safer?

To properly understand the answer to that question, we need to rewind to a time when nuclear energy was the future. During the “Atomic Age” of the 1950s, it was impossible to escape starry-eyed predictions that this miracle technology would transform society. “The very idea of splitting the atom had an almost magical grip on the imaginations of inventors and policymakers,” wrote nuclear historians John Byrne and Steven M. Hoffman in 1996. “As soon as someone said–in an even mildly credible way–that these things could be done, then people quickly convinced themselves … that they would be done.”

The newspapers and magazines of the time were filled with features detailing the irradiation of food to preserve it, the development of nuclear medicine, nuclear-powered cars and aircraft, and even radioactive golf balls that could be found with a Geiger counter. At the peak of this frenzy, the U.S. government launched Operation Plowshare–a series of demonstrations exploring the potential civilian uses of nuclear explosions. Such was the fever around nuclear energy that a newly designed two-piece swimsuit was even named after the testing site for atomic bombs–Bikini Atoll.

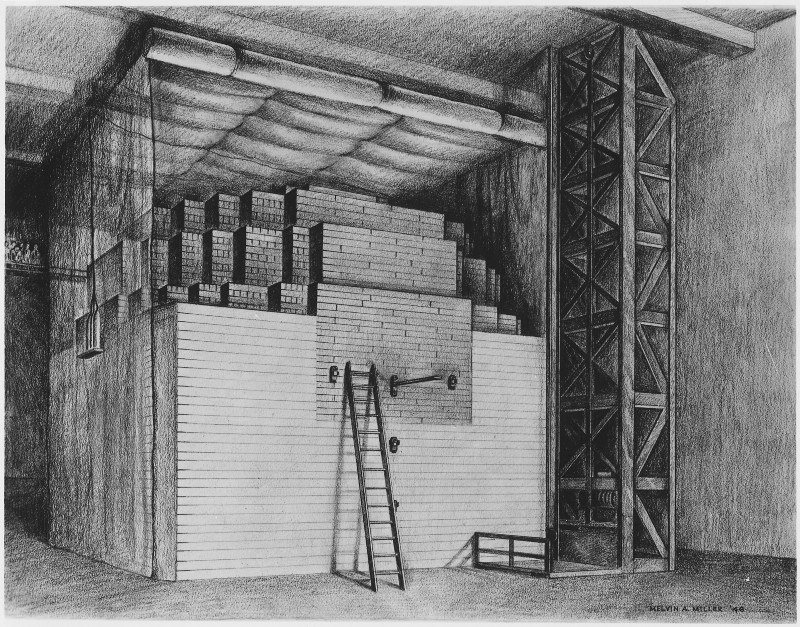

The reality, of course, fell somewhat short. In 1942, Enrico Fermi and a team of researchers at the University of Chicago built the first man-made nuclear reactor in the implausible setting of a squash court. It wasn’t too impressive–the reactor was known as Chicago Pile-1 because it was basically just a pile of graphite bricks and wooden timbers with a few cylinders of pure metallic uranium fuel inside. It ran for about four and a half minutes, producing a measly 0.5 watts–you would have needed 100 such reactors to power a typical 50-watt household light bulb. That wasn’t really the point, though. The goal was to prove that nuclear chain reactions, then a purely theoretical concept, were achievable in the real world.

There was no radiation shielding or coolant system on the reactor. Instead, Norman Hilberry, standing nearby holding an axe, was in charge of safety. The idea was that he’d use it to cut a rope holding a control rod suspended above the pile, dropping the rod in to halt the reaction if something went wrong. To this day, the term for an emergency shutdown is SCRAM, which supposedly stands for “safety control rod axe man,” though there is some debate about whether this story is apocryphal.

The scientists also didn’t tell the university’s president about the experiment. Arthur Compton, who was in charge of the project, later recounted:

“I should have taken the matter to my superior. But this would have been unfair. President Hutchins was in no position to make an independent judgment of the hazards involved. Based on considerations of the University’s welfare, the only answer he could have given would have been no. And this answer would have been wrong.”

After successfully sparking mankind’s first self-sustaining nuclear chain reaction, the assembled scientists celebrated with a bottle of Chianti, drunk from paper cups. They also placed a phone call to James Conant, the chairman of the National Defense Research Committee, speaking in impromptu code. “The Italian navigator has landed in the New World,” reported Compton. “How were the natives?” asked Conant. “Very friendly,” Compton replied.

Generation I

The success of Chicago Pile-1 paved the way for rapid advances in nuclear technology in the United States, leading to the development of the atomic bombs dropped on Hiroshima and Nagasaki at the end of the Second World War. After the war, while weapons development continued, there was a new focus on harnessing the power of the atom for peaceful purposes. Work began on using the tremendous heat released during nuclear fission to generate electricity.

The first nuclear plant to be connected to a national grid was a research reactor in the “Science City” of Obninsk in the Soviet Union–which generated about five megawatts between 1954 and 1959. It was followed by the first industrial-scale nuclear plant at Calder Hall in the United Kingdom in 1956, though this primarily produced weapons-grade plutonium–its 60 megawatts of electricity generation was a secondary bonus.

The first full-scale nuclear power plant dedicated solely to peacetime uses was the Shippingport Atomic Power Station, located on the Ohio River in western Pennsylvania. Its reactor first achieved a self-sustaining chain reaction on December 2, 1957, fueled by a core originally designed for a cancelled nuclear aircraft carrier. Unfortunately, its electricity cost about 10 times as much as local coal-fired power. The first privately funded plant opened just a few years later, in 1960, in Dresden, Illinois, followed swiftly by the first commercial nuclear plant, Yankee Rowe, in Rowe, Massachusetts.

Today, the nuclear industry refers to these prototype plants as “Generation I.” More proof-of-concept than anything else, many of them used graphite moderators, cooled by gas or water. This was cheap and allowed them to run on non-enriched uranium, but the designs left components susceptible to corrosion and expansion over time. Despite this major flaw, the last remaining generation I reactor in the West–the Wylfa Nuclear Power Station on the island of Anglesey in Wales–wasn’t shut down until December 30, 2015. A first-generation experimental reactor at Yongbyon in North Korea was shut down in 2007 but is believed to have resumed operation in 2015.

Generation II

A second generation of nuclear reactors rolled out in the late 1960s. They came in various flavors–pressurized water, boiling water, advanced gas-cooled, and the Soviet-designed Reaktor Bolshoy Moshchnosti Kanalnyy (RBMK) are some examples–but their unifying feature was that they were more economical and reliable than their predecessors. Most were designed with an operational lifespan of 30 or 40 years, allowing the firms that built them to take out loans they could repay over the reactor’s period of operation.

Many of the designs from this new generation came with built-in electrical and mechanical safety features that would kick in automatically in the event of danger, and some were inherently stable as well. In a pressurized water reactor (the type used in most nuclear submarines), for example, the reactor becomes less reactive the hotter the coolant gets, so the core regulates itself and stays very stable.

In an RBMK reactor, however, the opposite is true: when the water coolant heats up and boils into steam, the reactor becomes more reactive, not less. That design flaw became a problem on April 26, 1986–perhaps the darkest day in the history of nuclear power. In the control room at the Chernobyl power plant in what is now Ukraine, operators detected a sudden and unexpected power surge during a low-power safety test. They initiated an emergency shutdown–a SCRAM–by inserting control rods to slow the reaction. But Chernobyl’s rods were tipped with graphite, which briefly increased the reactor’s power output when they were first inserted.

What happened next is well known. Power output spiked to more than 10 times the normal operational output, triggering a steam explosion that ruptured the reactor casing and blew through the roof of the building, severing most of the coolant lines feeding the reactor chamber.

A few moments later, a second, more powerful explosion occurred that terminated the nuclear reaction and spewed superheated lumps of graphite into the surrounding area–starting fires on the roofs of adjacent reactors. In those reactors, operators were given respirators and potassium iodide tablets and told to continue work.

The explosion and subsequent fire released large quantities of radioactive particles into the air, which the winds then carried across a huge swathe of Europe and the western Soviet Union. In the aftermath, 32 people died of radiation sickness–most of them fire and rescue workers who had not been told how dangerous the radiation in the smoke was. The total death toll from the accident, however, will likely never be known. Estimates of the number of people killed by radiation exposure over the decades since Chernobyl range wildly, from 4,000 to as many as 200,000 (though the latter figure relies heavily on non-peer-reviewed sources).

In the wake of the disaster, the international community was stunned. There had been nuclear accidents before–including Windscale in 1957, SL-1 in 1961, and Three Mile Island in 1979–but this was the first time that such a huge area was affected. In total, nearly 62,550 square miles [162,000 square kilometers] of Europe were contaminated with radioactive caesium.

While there had been scattered opposition to nuclear energy before Chernobyl–mostly connected to the growing environmental movement–the accident catalyzed public opinion against the technology. In Rome, almost 200,000 people marched in protest against government plans to build reactors. This public pressure forced many countries to begin rethinking their nuclear programs–slowing, and in some cases halting, the construction of new reactors.

Generation III

During the 1980s and 1990s, scientists continued to refine reactor designs. They substantially improved many facets of the technology: fuel processing, thermal efficiency, operational lifespan (up to 60 years), and safety features. A better understanding of the technologies and materials, thanks to data from the hundreds of operating reactors around the world, allowed researchers to design passive safety systems that need neither operator action nor electronic systems to function in an emergency.

Getting these designs built and tested, however, proved far more troublesome in the wake of the Chernobyl disaster. Despite the purported safety improvements, few people wanted an experimental nuclear reactor in their backyard, so major protests accompanied any proposal to build a new power facility. “Generation III plants were designed, but were never really constructed,” explained Nikolaus Muellner, head of the International Nuclear Risk Assessment Group, an independent body of nuclear safety experts. “From 1990 to 2010, you have a decline in [the] building of new plants.” Of the few that were constructed, mostly in Japan, only four are in operation today.

That’s four out of the 438 nuclear reactors operating around the world at the time of writing. After reading that statistic, some of you are probably putting two and two together and thinking something along the lines of, “Wait, so what about the rest?” The overwhelming majority of them are leftovers from generation I and generation II.

Yep, that’s right. Almost all of the nuclear reactors in the world today were designed before the 1980s–before the Chernobyl accident. They’re not getting replaced with safer models because the public hates the idea of building new nuclear reactors. Instead, over the years, engineers have given these old power plants incremental safety upgrades to prolong their lives far beyond their intended operating lifespans. “There’s only so much you can do to improve the safety of existing reactors,” said Muellner. “If you [talk to the nuclear industry] about safety, then usually you’re told about the safety of generation III nuclear reactors–the current state-of-the-art of safety technology. But we’re not going to see those in the next 20 or 30 years in Europe and the United States.”

That, in a nutshell, is why nuclear energy isn’t getting any safer: extending the lifetime of an existing plant is a far easier sell to the public than building a new one. But it’s not the whole story. To judge whether it matters that nuclear energy isn’t getting safer, we need to talk about how safe it is to begin with.

A brief interlude to ask: How safe is nuclear energy?

It depends on what you mean by safe, of course. “If you’re going to ask the [nuclear] industry or a regulator, then you’ll probably get as an answer: ‘Our existing nuclear power plants are safe,’” said Muellner. “The point is that this is really a matter of definition.”

He continued: “What is meant, if you’re saying that something is safe, is that society has set up a set of rules and conventions according to which a nuclear power plant is judged. If the plant passes this test, then it is called safe.” Those rules and conventions differ around the world but mostly boil down to probabilities–whether a nuclear plant can survive an earthquake that happens once every 100,000 years, for example. “This means, from an engineering point of view, that the plants fulfill their probability targets,” Muellner said. “Which means that according to the definition, they’re safe. But this doesn’t mean that an accident cannot happen.”

There’s another problem with relying on probability targets: those probabilities are shifting. Thanks to climate change, a once-in-1,000-years flood is becoming a once-in-100-years flood in many parts of the world, and the industry isn’t really keeping up. The Western European Nuclear Regulators Association (WENRA), for example, now requires that power plants account for climate change in their safety analyses. But exactly how to do that is still very much up for discussion, and with the slow pace of new plant construction, that discussion isn’t moving very fast.
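To get a feel for why that shift matters, here is a rough back-of-the-envelope sketch in Python. It rests on a simplifying assumption of our own, not one taken from any regulator: that every year is independent and carries the same probability 1/T of a “once-in-T-years” event. Under that assumption, the chance of at least one such event over a plant lifetime of N years is 1 - (1 - 1/T)^N. The 60-year lifetime is borrowed from the generation III lifespans mentioned earlier; real hazard analyses are considerably more involved.

```python
def lifetime_probability(return_period_years: float, lifetime_years: int) -> float:
    """Chance of at least one 'once-in-T-years' event during a plant's lifetime.

    Simplifying assumption (for illustration only): each year is independent
    and has the same probability 1/T of the event occurring.
    """
    annual_probability = 1.0 / return_period_years
    # 1 minus the probability that the event never happens in any year.
    return 1.0 - (1.0 - annual_probability) ** lifetime_years


LIFETIME_YEARS = 60  # illustrative lifetime, not a figure for any specific plant

for label, return_period in [
    ("once-in-100,000-years earthquake", 100_000),
    ("once-in-1,000-years flood", 1_000),
    ("once-in-100-years flood", 100),
]:
    p = lifetime_probability(return_period, LIFETIME_YEARS)
    print(f"{label}: {p:.2%} chance over {LIFETIME_YEARS} years")
```

Under these assumptions, turning a 1,000-year flood into a 100-year flood raises the odds of facing at least one such flood during a 60-year operating life from roughly 6 percent to roughly 45 percent.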

So to assess the safety of nuclear energy, let’s start with the number of people killed by the technology. By that standard, nuclear is by far the safest form of power generation. If you run the numbers, as energy researcher Brian Wang did in 2011, you’ll find that atomic energy results in just 0.04 deaths per terawatt-hour produced, compared to 100 for coal and 36 for oil. Even renewables are deadlier than nuclear, at 0.44, 0.15, and 1.4 deaths per terawatt-hour for solar, wind, and hydro, respectively. Other studies have come up with different numbers but the same conclusions.
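To put those figures on a common scale, the short Python sketch below divides each quoted rate by nuclear’s. It uses only the 2011 figures given in this paragraph; the dictionary is a transcription of the text, not an independent dataset, and other studies would give different ratios.

```python
# Deaths per terawatt-hour, transcribed from the 2011 figures quoted above.
DEATHS_PER_TWH = {
    "coal": 100.0,
    "oil": 36.0,
    "hydro": 1.4,
    "solar": 0.44,
    "wind": 0.15,
    "nuclear": 0.04,
}


def times_deadlier_than_nuclear(source: str) -> float:
    """Ratio of a source's deaths per TWh to nuclear's deaths per TWh."""
    return DEATHS_PER_TWH[source] / DEATHS_PER_TWH["nuclear"]


# List sources from deadliest to least deadly per unit of electricity.
for source in sorted(DEATHS_PER_TWH, key=DEATHS_PER_TWH.get, reverse=True):
    print(f"{source:>7}: {times_deadlier_than_nuclear(source):,.1f} times nuclear's rate")
```

By these numbers, coal causes about 2,500 times as many deaths per terawatt-hour as nuclear, and even wind, the safest of the renewables listed, comes out at nearly four times nuclear’s rate.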

Take the 2011 Fukushima Daiichi nuclear accident, for example, which swayed public opinion much as Chernobyl had. Nobody died as a result of radiation exposure, though six people were exposed to doses beyond the lifetime legal limit. Predicted future cancer deaths among the population living near Fukushima range from none to a few hundred. A few hundred deaths is still a few hundred too many, but compared to the 13,000 premature deaths caused every year by air pollution from coal-fired power stations in the United States alone, it’s a drop in the ocean. The dangers of nuclear power, in terms of mortality, are significantly overblown.

That fact, however, won’t be of much comfort to the 300,000 people in Japan who were forced to leave their homes as a result of the Fukushima disaster, or to the families of the approximately 1,600 who died as a result of the evacuation conditions. Many evacuees won’t be able to return for decades, and the trauma of the disaster has pushed rates of mental illness among the evacuated population to five times the Japanese average.

Following Chernobyl, a similar number of people were displaced. While most received very low doses of radiation, the towns where they lived will be contaminated for generations. Across Europe, areas of land where radioactive particles fell in substantial amounts are still subject to farming restrictions 25 years after the disaster. It’s clear that when assessing the safety of nuclear energy, we’ve got to look well beyond deaths caused by radiation.

Ultimately, the question of how safe nuclear power is depends on your personal perception of risk. While accidents are far less common with nuclear energy than with other forms of power generation, those accidents tend to have wide-ranging and hard-to-predict consequences. Is that better or worse than the alternatives? Society’s lengthy attempt to answer that question is at the very heart of the problems facing the nuclear industry today.

Generation III+ and beyond

So, as established earlier, nuclear power is trapped. The public won’t allow new nuclear power plants to be built because of a perception that they’re unsafe, and the safety of power plants can’t be substantially improved without building new ones. The “nuclear renaissance” the industry promised for so many years is looking as far away as ever.

Is there a way out of this mess? Perhaps. But it doesn’t lie within the United States and Europe. In China and India, public opinion is less of a factor in political decision-making, and both nations are conducting aggressive rollouts of nuclear technology. China currently operates 29 nuclear reactors and plans to more than double that figure before 2020, while India runs 21 reactors with another 20 planned in the coming years.

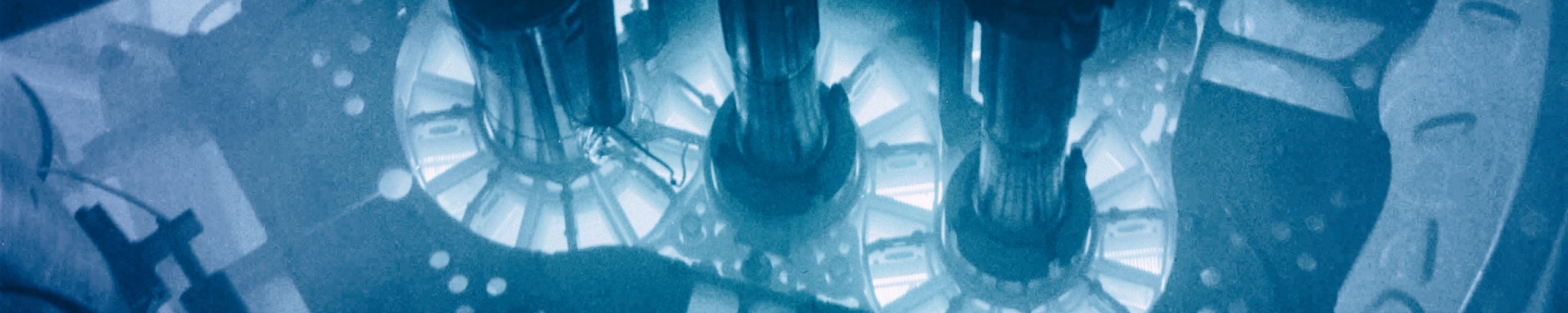

Crucially, these are not the generation II reactors that were involved in the Chernobyl and Fukushima accidents. They’re not even the largely unbuilt generation III ones. They’re known as generation III+, an evolutionary development of generation III that offers further improvements on the same basic technology. Those improvements include additional passive safety features (like multiple barriers against the release of radioactive material, and coolant reserves stored above the reactor that would flow down onto it in the event of an accident), as well as lower fuel consumption and waste production.

Once these nuclear plants are built, they should be–on paper at least–significantly safer than older ones, which (as noted above) already have pretty low accident rates. With generation III+ plants, those rates should drop even more–and if that translates into no major incidents in the coming decades, public opposition may fade and nuclear could finally get a shot at its long-delayed renaissance.

By the time that happens, though, we may be using generation IV reactors. Nuclear scientists consider generation III finished for now, believing the designs are about as good as they can be. Contemporary research instead focuses on completely different technologies, like molten salt and fast-neutron reactors, which promise to be more economical, longer-lived, and safer still than their forebears. But once again, for progress to substantially advance, they’ll need to get built, and most experts think that even this step is still two to four decades away.

By then, it might be too late for nuclear. Already, solar power is reaching grid parity with fossil fuels in many parts of the world, and the relative ease of installing a few photovoltaic panels compared to building a huge nuclear plant means that solar can be far more nimble. Combine that with the global momentum behind solar and other renewables, and nuclear isn’t looking like a good bet for investors. Plus, even if the public were clamoring for more nuclear power, potential builders would need to contend with a laundry list of other issues: high upfront costs, stringent safety regulations, radioactive waste, and the risk of weapons proliferation.

So from a wider perspective, it doesn’t actually matter whether nuclear energy is getting safer, nor that it was pretty safe to begin with. Nuclear just can’t meet the needs of modern society in the same way that renewables can. The dreams of the atomic age are dying.

But hey–why worry about the dreams of the past? We have the renewable, sustainable dreams of the future instead.

How We Get To Next was a magazine that explored the future of science, technology, and culture from 2014 to 2019. This article is part of our Power Up section, which looks at the future of electricity and energy.