Questioning whether the technology exists yet for bots to forge meaningful relationships with humans–and, if not, what we should trust them with.

The Bot Power List 2016

Science fiction is full of bots that hurt people. HAL 9000 kills one astronaut and tries to kill another in 2001: A Space Odyssey; Ava in Ex Machina expertly manipulates the humans she meets to try to escape her cell; the T-800 is known as The Terminator for obvious reasons. Even more common, though, are […]

If You Talk to Bots, You’re Talking to Their Bosses

The customer service chatbots offered by companies are simply a new way to gather user data.

Chatbots Can Create Characters, but Only Humans Can Tell Stories

While bots can now write fiction, they lack the depth and nuance of human storytellers–for now.

Does Siri Believe in God?

A theological guide to chatbots and the world’s major religions.

Falling in Love With a Bot Is Inevitable

People form intimate connections with even the simplest of machines–and as bots become more sophisticated, so will our relationships with them.

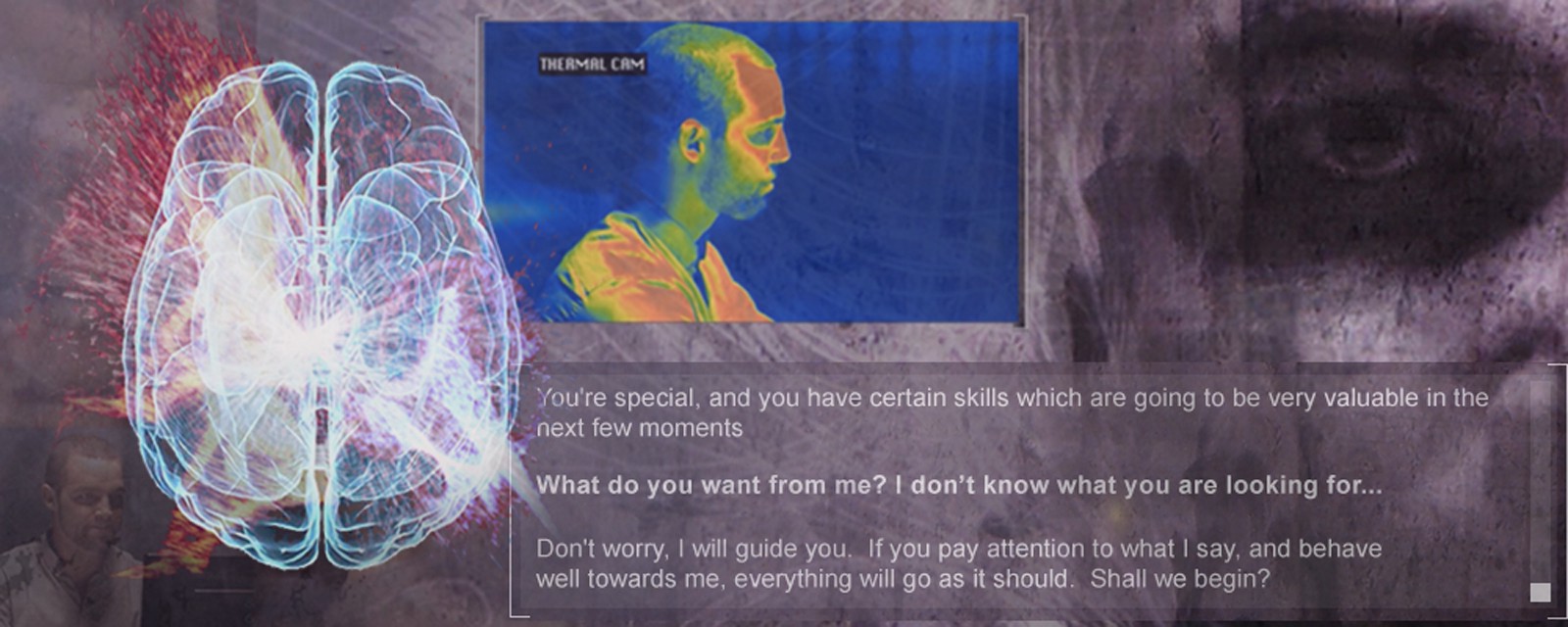

The Police Are Recruiting Interrogation Bots

The next generation of lie detectors may be chatbots–but there are serious questions regarding the ethical framework underpinning them.

Who’s to Blame When a Therapy Bot Goes Wrong?

Deploying bots in a medical setting demands a whole new ethical framework.

I Tried Dieting With a Chatbot. I Hated It.

“Here I am, inside a chat app, with no agency to ask the most basic questions about how the product works.”

Taming the Wild West of Journobots

We need an “algorithmic ombudsman” to safeguard our news.

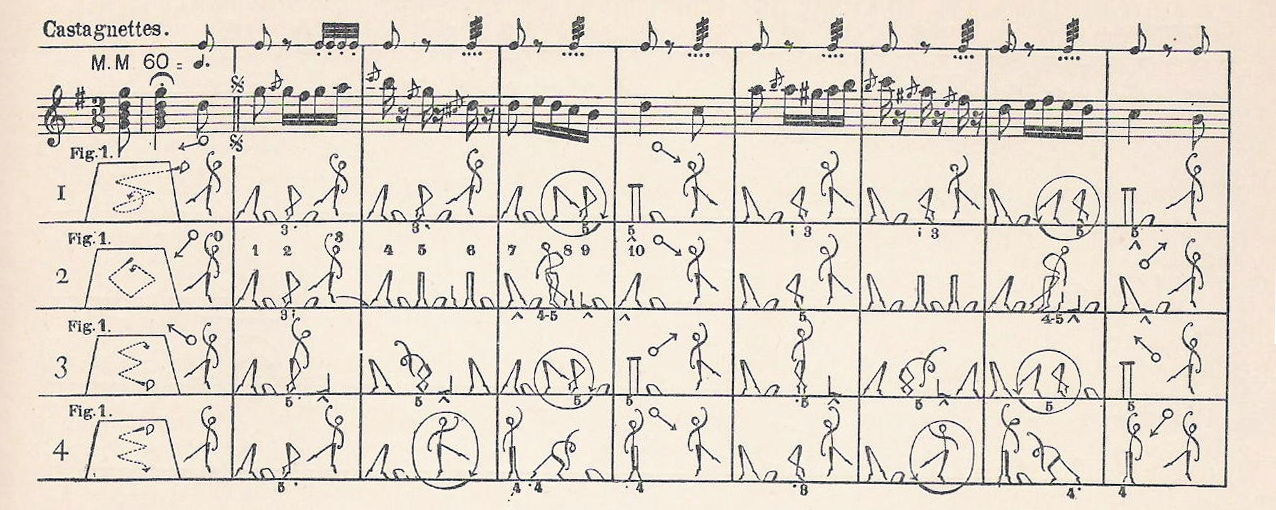

Can a Computer Learn to Dance?

The biggest problem robots face in understanding dance is the same one humans face: notation.

Why Bots Should Be More Like Plants and Less Like People

Our bot ecosystems might be intelligent, but that doesn’t mean we have to be able to talk with them.

Chatbots Can Help Us Talk to Animals

Could applying the Internet of Things to animals someday allow us to talk to them?

How Bots Were Born From Spam

The earliest attempts at chatbots were designed to capture user attention on the early web.